August 2026. In five months, high-risk AI systems must be fully compliant with the EU AI Act. Your planning module, your quality control, your chatbot: do they fall under high risk? Most organisations don't know. This article covers what you need to know, from risk levels and deadlines to the concrete steps you should take now.

What is the AI Act?

The EU AI Act is the world's first comprehensive AI law. Where the GDPR governs how you handle personal data, the AI Act governs how you build, deploy and use AI systems.

The principle is simple: the greater the risk an AI system poses to people, the stricter the rules. The law doesn't look at the technology itself, but at what the system does and what impact it has.

The law applies to everyone who builds, sells or uses AI systems within the EU, whether you're a tech company developing AI agents, a hospital deploying diagnostic AI, or a factory monitoring employee performance with AI.

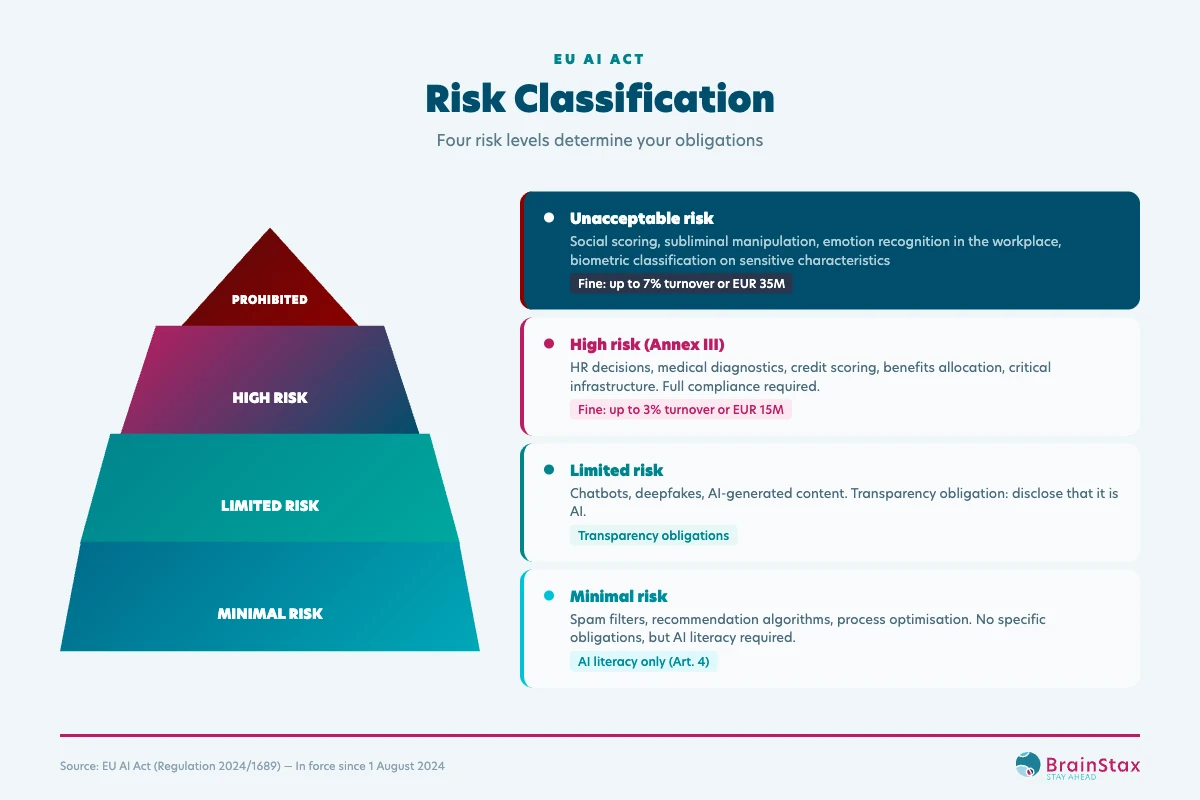

The four risk levels

The AI Act divides all AI systems into four categories. Each level carries different obligations.

Prohibited (unacceptable risk)

Some AI applications are simply banned. There is no compliance pathway, and only a few narrowly defined exceptions exist. The bans have been in effect since 2 February 2025.

- Social scoring. Rating people based on their social behaviour in ways that lead to unfavourable treatment. The ban covers governments and private organisations alike.

- Emotion recognition in the workplace. AI that analyses employee emotional states. In factories, warehouses, offices. Prohibited, except where it is used for medical or safety reasons.

- Subliminal manipulation. AI that influences behaviour without people realising it.

- Biometric surveillance. Real-time facial recognition in public spaces (with narrow exceptions for law enforcement).

The fine: up to 7% of global annual turnover or EUR 35 million, whichever is higher.

High risk (Annex III)

This is the category where it gets real for most organisations: AI systems that make or influence decisions in areas that directly affect people's health, safety or fundamental rights.

The most relevant domains for manufacturing, healthcare, public sector and logistics:

- Employment (recruitment, performance evaluation, task allocation, dismissal)

- Critical infrastructure (energy, water, transport)

- Essential services (credit, insurance, benefits, emergency services)

- Biometrics (identification, categorisation)

Education, law enforcement, migration and border control, and the administration of justice also fall under high risk.

These systems require a full compliance package: risk management, data governance, technical documentation, human oversight, conformity assessment with CE marking, and ongoing post-market monitoring. Fine for non-compliance: up to 3% of global annual turnover or EUR 15 million, whichever is higher.

Limited risk

AI systems that interact directly with people must be transparent. Specifically: you must disclose that it's AI. This applies to chatbots, AI-generated text, images, audio and video (deepfakes).

No heavy compliance obligations, but a legal transparency requirement.

Minimal risk

Spam filters, recommendation algorithms, internal process optimisation. No specific AI Act obligations. However, all organisations using AI must ensure AI literacy (Article 4): your planners, team leads and quality managers must have sufficient knowledge of the AI systems they work with.
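The four levels and their obligations can be read as a simple lookup. The sketch below is only an illustration of the summary in this section, not a legal checklist: the names `RiskLevel`, `OBLIGATIONS` and `obligations_for` are hypothetical, and the obligation labels are shortened from the text above.

```python
from enum import Enum


class RiskLevel(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations as summarised in this article (assumption: simplified labels,
# not the full legal requirements).
OBLIGATIONS = {
    RiskLevel.PROHIBITED: ["stop using the system"],
    RiskLevel.HIGH: [
        "risk management",
        "data governance",
        "technical documentation",
        "human oversight",
        "conformity assessment with CE marking",
        "post-market monitoring",
    ],
    RiskLevel.LIMITED: ["disclose that people are dealing with AI"],
    RiskLevel.MINIMAL: [],
}

# AI literacy applies to every organisation that uses AI, so it is added
# to each level where use is allowed at all.
AI_LITERACY = "AI literacy (Article 4)"


def obligations_for(level: RiskLevel) -> list[str]:
    """Return this article's summary of obligations for one risk level."""
    if level is RiskLevel.PROHIBITED:
        return list(OBLIGATIONS[level])
    return OBLIGATIONS[level] + [AI_LITERACY]


for level in RiskLevel:
    print(level.value, "->", obligations_for(level))
```

Even a minimal-risk system returns one entry here, which mirrors the point above: AI literacy applies regardless of the risk level.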

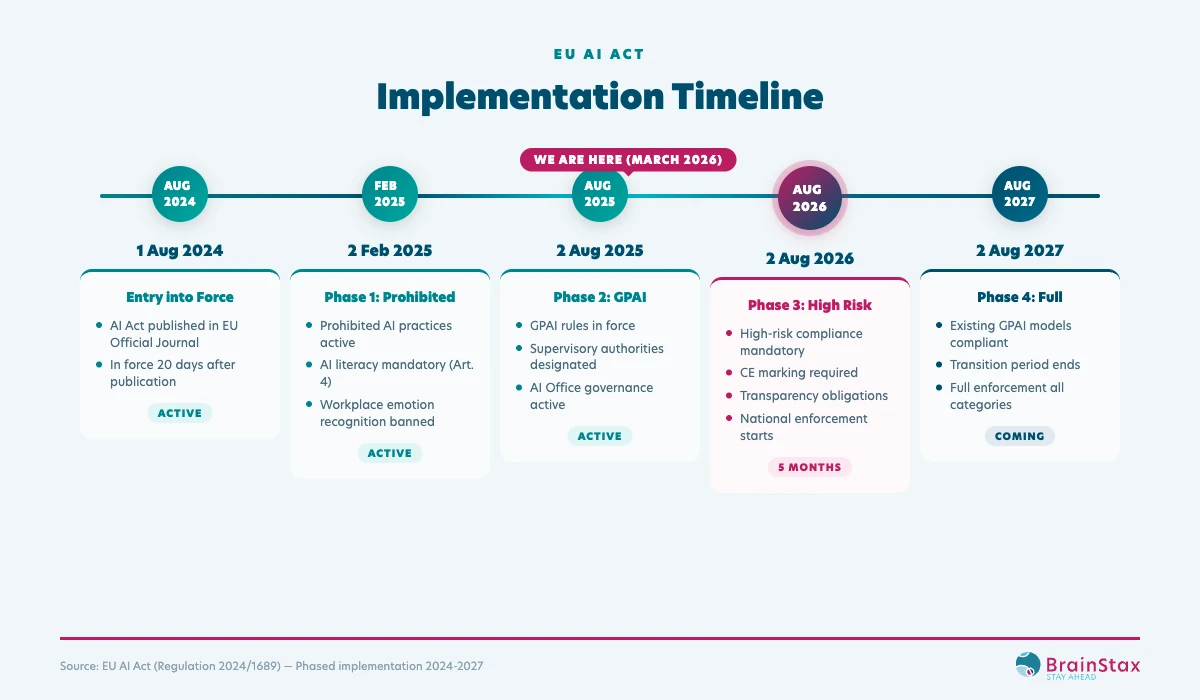

Timeline: what applies now?

The AI Act works with phased deadlines. Some obligations already apply. Others are coming.

Already active (since February 2025)

- All prohibited AI practices are banned. Full stop. If your organisation uses emotion recognition in the workplace, you're already in violation.

- AI literacy is mandatory. Everyone working with AI must have sufficient knowledge. This is a legal obligation, not a recommendation.

Active since August 2025

- GPAI rules (General-Purpose AI) are in force. Providers of foundation models like GPT-4 and Claude must maintain technical documentation and publish a summary of their training data.

- National supervisory authorities have been designated. Five in the Netherlands (more on that later).

Coming: 2 August 2026

This is the big deadline. From this date:

- High-risk AI systems must be fully compliant

- CE marking and EU database registration are mandatory

- Transparency obligations for chatbots and AI content become active

- National enforcement begins at full capacity

That's five months away. The European Commission proposed in November 2025 (the "Digital Omnibus") to potentially postpone this deadline to December 2027. But that's a proposal, not law. Plan for August 2026.
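To check which phase applies on a given date, the timeline above can be sketched as a small lookup. This is a rough illustration only: `MILESTONES`, `in_force` and `days_until_high_risk_deadline` are hypothetical names, the 2 August day for the 2025 milestone is an assumption (the text above only names the month), and the sketch ignores the proposed postponement.

```python
from datetime import date

# Milestones as described in this article. Assumption: the August 2025
# milestone falls on 2 August, in line with the other phase dates.
HIGH_RISK_DEADLINE = date(2026, 8, 2)
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy mandatory"),
    (date(2025, 8, 2), "GPAI rules in force; national authorities designated"),
    (HIGH_RISK_DEADLINE, "High-risk compliance, CE marking, EU database "
                         "registration and transparency obligations"),
]


def in_force(on: date) -> list[str]:
    """Return the milestones that already apply on the given date."""
    return [label for start, label in MILESTONES if start <= on]


def days_until_high_risk_deadline(today: date) -> int:
    """Days left until 2 August 2026 (negative once the date has passed)."""
    return (HIGH_RISK_DEADLINE - today).days


today = date(2026, 3, 2)
print(in_force(today))
print(days_until_high_risk_deadline(today))  # 153
```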

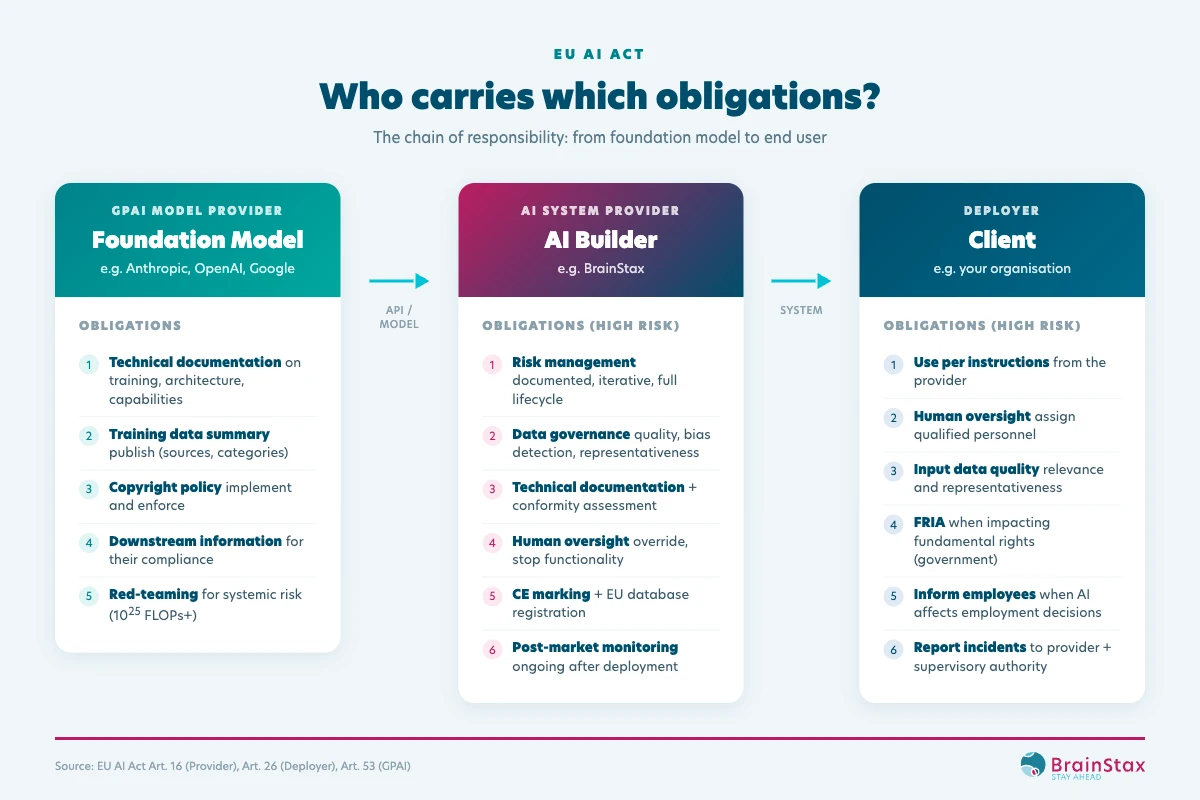

Who carries which obligations?

The AI Act distinguishes between three roles, each with different obligations. Know which role your organisation plays, because everything else follows from it.

GPAI Model Provider

Companies like Anthropic (Claude), OpenAI (GPT-4) and Google (Gemini). They build the foundation models that AI applications run on. Their obligations: technical documentation, training data summaries, copyright policy, and information to downstream users.

AI System Provider (the builder)

This is the party that designs, builds and places an AI system on the market. Think of your AI vendor, your implementation partner, or your own development team if you build AI in-house. The provider carries the heaviest obligations:

- Documented risk management throughout the entire lifecycle

- Data governance: quality, bias detection, representativeness of training data

- Technical documentation: architecture, performance metrics, test results, limitations

- Human oversight: built-in override capability and stop functionality

- Conformity assessment with CE marking

- Post-market monitoring: ongoing monitoring after deployment

Deployer (the user) — this is probably you

The organisation that deploys an AI system under its own responsibility. Do you purchase an AI solution from a vendor and use it in your operations? Then you're the deployer. And that comes with its own obligations:

- Use the system according to the provider's instructions

- Assign qualified personnel for human oversight

- Ensure quality input data

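- Conduct a Fundamental Rights Impact Assessment (FRIA) where the Act requires one, for example if you are a public body or a private organisation providing public services and you deploy high-risk AI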

- Inform employees when AI influences employment decisions

- Report incidents to the provider and supervisory authority

Important: if you as deployer substantially modify the system, use it outside its intended purpose, or offer it under your own name, you automatically become the provider. With all corresponding obligations.
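The role rule above can be expressed as a short decision function. This is a minimal sketch under simplifying assumptions: the `Usage` fields and the `role` function are hypothetical names, and real cases (for example what counts as a "substantial" modification) need legal judgement that a boolean cannot capture.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """How an organisation relates to one AI system (illustrative flags)."""
    built_in_house: bool = False
    substantially_modified: bool = False
    used_outside_intended_purpose: bool = False
    offered_under_own_name: bool = False


def role(usage: Usage) -> str:
    """Return 'provider' if any provider trigger applies, else 'deployer'."""
    provider_triggers = (
        usage.built_in_house,
        usage.substantially_modified,
        usage.used_outside_intended_purpose,
        usage.offered_under_own_name,
    )
    return "provider" if any(provider_triggers) else "deployer"


# A purchased system used as instructed keeps you in the deployer role.
print(role(Usage()))  # deployer
# Rebranding the same system under your own name shifts you to provider.
print(role(Usage(offered_under_own_name=True)))  # provider
```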

What does this mean per sector?

Risk classification depends on what your AI system does, not your sector. But some applications are more common in certain sectors. This overview shows where the risks lie.

Manufacturing

Good news: many AI applications in manufacturing fall under minimal risk. Optimising production schedules, predictive maintenance, yield optimisation. No compliance package needed for those.

But watch out. Once AI monitors or evaluates employee performance (individual output, productivity scores, absence patterns), it's high risk. And if AI controls safety components in machinery (robotic arms, conveyor systems, emergency stops), it falls under the Machinery Regulation. That's also high risk.

And emotion recognition in the workplace? Prohibited. No discussion. Learn more about AI in manufacturing.

Healthcare

Healthcare is hit hardest. Virtually every AI application that influences clinical decisions is high risk. Diagnostic support, triage, treatment recommendations: all fall under Annex I (medical devices) or Annex III (essential services).

Add to that the fact that healthcare AI almost always processes special category personal data. That means alongside the AI Act, the GDPR applies in full force. Double compliance burden.

Administrative AI (scheduling, billing, logistics) is typically minimal risk. But once it influences access to care, it shifts to high risk. See how compliant AI works in healthcare.

Public sector

Governments carry extra responsibility due to citizens' fundamental rights. AI that assesses benefits, performs fraud detection, or makes semi-automated decisions about citizens is high risk.

Informational chatbots that guide citizens? Limited risk, with transparency obligation. But once such a chatbot refers to specific services or influences application processes, it can shift to high risk.

Public deployers must additionally conduct a Fundamental Rights Impact Assessment (FRIA) before deploying high-risk AI. And they must inform employee representatives. Learn more about AI in the public sector.

Logistics

Route optimisation, load planning, demand forecasting: minimal risk. The core of logistics AI falls outside the heavy obligations.

But here too: employee performance monitoring in warehouses is high risk. AI that evaluates drivers on driving behaviour and links that to employment consequences? High risk. Autonomous warehouse robots controlled by AI? Check the Machinery Regulation. Explore AI applications in logistics.

GPAI and foundation models

Does your organisation use ChatGPT, Claude, Gemini or another foundation model to build AI applications? Then the GPAI rules matter to you, even though most of their obligations sit with the model provider.

The division is clear. The foundation model provider (OpenAI, Anthropic, Google) delivers the technical documentation, training data summaries and information you need for your own compliance. You, as the builder of the resulting AI system, classify that system based on the four risk levels and carry the corresponding obligations.

Concretely: if you use Claude via the API to build an AI agent that evaluates employee performance in a warehouse, then Anthropic is the GPAI provider and you are the provider of a high-risk AI system. With everything that entails.

The GPAI rules have been active since August 2025. The European Commission published guidelines in July 2025 and a voluntary GPAI Code of Practice is available.

The AI Act and GDPR

The AI Act and GDPR are two separate laws that exist side by side. When an AI system processes personal data, both apply. In case of conflict, the GDPR takes precedence.

In practice, this means double compliance:

- The GDPR requires a Data Protection Impact Assessment (DPIA) for high-risk processing

- The AI Act requires a conformity assessment for high-risk systems

- For public deployers, a Fundamental Rights Impact Assessment (FRIA) is added

This isn't a theoretical problem. Seven of the eight Annex III high-risk categories involve significant processing of personal data. Anyone deploying high-risk AI almost always deals with both laws.

The bright side: many principles overlap. Data quality, transparency, human oversight. Anyone who seriously implements the AI Act simultaneously lays a solid GDPR foundation.

Dutch supervisory authorities

The Netherlands has designated five supervisory authorities for the AI Act. This is deliberate: each sector receives specialist oversight.

- Dutch Data Protection Authority (AP): AI involving personal data, co-coordinator

- Digital Infrastructure Inspectorate (RDI): market surveillance of AI systems

- Authority for Consumers and Markets (ACM): consumer-facing AI and competition

- Financial Markets Authority (AFM): AI in financial services

- De Nederlandsche Bank (DNB): AI in banking

The National AI Implementing Act has been enacted. The AP and RDI have inspection and sanctioning powers, including access to source code. That last point is new. During an inspection, the regulator can request to see your code.

The Netherlands is leading the way. Even before the AI Act, the AP took enforcement action against algorithmic discrimination at the Tax Authority and against fraud detection in social security. The supervisory authorities are not passive.

Fines

The AI Act has three fine categories:

| Category | Maximum fine | Or percentage of turnover, whichever is higher |
|---|---|---|
| Prohibited AI practices | EUR 35 million | 7% global annual turnover |
| Non-compliance with high-risk obligations | EUR 15 million | 3% global annual turnover |
| Providing incorrect information to authorities | EUR 7.5 million | 1% global annual turnover |

For comparison: the GDPR maxes out at EUR 20 million or 4%. The AI Act goes structurally further. An organisation with EUR 1 billion in turnover risks a fine of up to EUR 70 million for prohibited practices (7% of turnover) and up to EUR 30 million for high-risk non-compliance (3%).

These aren't theoretical maximums. The AP has already imposed GDPR fines of tens of millions. There's no reason to assume AI Act fines will be milder.
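The arithmetic behind these maximums can be sketched in a few lines. The sketch takes the caps from the fine table and applies the "whichever is higher" rule described in this article. `FINE_CAPS` and `max_fine` are hypothetical names, amounts are whole euros, and any special treatment for smaller companies is deliberately left out.

```python
# Caps from the fine table: (fixed amount in EUR, percent of global annual turnover).
FINE_CAPS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_non_compliance": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine(category: str, turnover_eur: int) -> int:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = FINE_CAPS[category]
    turnover_cap = turnover_eur * percent // 100  # integer maths avoids float rounding
    return max(fixed_cap, turnover_cap)


# EUR 1 billion turnover: the percentage is the binding cap.
print(max_fine("prohibited_practice", 1_000_000_000))       # 70000000
print(max_fine("high_risk_non_compliance", 1_000_000_000))  # 30000000
# EUR 200 million turnover: 7% is EUR 14 million, so the fixed cap applies.
print(max_fine("prohibited_practice", 200_000_000))         # 35000000
```

The crossover sits at EUR 500 million in turnover for the first two categories: below that the fixed amount is the higher figure, above it the percentage is.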

What should you do now?

Regardless of your sector: there are steps you need to take now. Not in 2027. Now.

Immediately (Q1 2026)

- AI inventory: map all AI systems you use, manage or have built for you.

- Risk classification: determine per system whether it's prohibited, high, limited or minimal risk. Document your reasoning.

- AI literacy: formalise your policy. This has been mandatory since February 2025.

- GPAI usage: document which foundation models you use, how and for what purpose.

- Contracts: check your contracts with AI vendors. Do they specify who is provider and who is deployer? Is compliance documentation part of the agreement?

Second quarter 2026

- Compliance documentation for high-risk systems

- Risk management framework (documented, iterative)

- Data governance formalisation (quality requirements, bias detection, representativeness)

- Bias testing protocol (testing across demographic groups)

Before August 2026

- Conformity assessments completed for high-risk systems (together with your AI provider)

- Technical documentation complete per system — request this from your vendor

- Post-market monitoring established: who monitors, who escalates, who reports?

- Inform employees when AI influences employment decisions

Don't know where to start? Begin with an AI inventory. Map which AI you use, who the provider is, and which risk level applies. That alone gives you a clear picture of what needs to happen.
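An AI inventory does not need special tooling to begin with. As a rough sketch, each system can be one record holding the fields named above. The `AISystem` class, its field names and the example systems and vendors are all hypothetical, and the risk levels shown are illustrations, not classifications of any real product.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    vendor: str       # who the provider is
    our_role: str     # "provider" or "deployer"
    risk_level: str   # "prohibited", "high", "limited" or "minimal"
    reasoning: str    # documented rationale for the classification


# Lower number = look at it first.
URGENCY = {"prohibited": 0, "high": 1, "limited": 2, "minimal": 3}


def by_urgency(inventory: list[AISystem]) -> list[AISystem]:
    """Sort the inventory so the riskiest systems come first."""
    return sorted(inventory, key=lambda system: URGENCY[system.risk_level])


# Hypothetical example entries.
inventory = [
    AISystem("Route optimiser", "Vendor A", "deployer", "minimal",
             "Optimises routes; no decisions about people"),
    AISystem("Warehouse performance scoring", "Vendor B", "deployer", "high",
             "Evaluates individual employee performance"),
    AISystem("Customer chatbot", "Vendor C", "deployer", "limited",
             "Interacts directly with people"),
]

for system in by_urgency(inventory):
    print(f"{system.risk_level:<8} {system.name} ({system.vendor}): {system.reasoning}")
```

A spreadsheet with the same columns works just as well. The point is that every system has an owner-visible record with a role, a risk level and the reasoning behind it.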

The opportunity

So far, the AI Act sounds mainly like an obligation. It is. But it's also an opportunity.

Compliance forces you to document your AI usage, assess risks and ensure quality. These aren't bureaucratic exercises. They're precisely the steps that separate AI that delivers value from AI that fails. In a market where, by an often-cited estimate, around 80% of AI projects fail, a structured approach gives you an edge over competitors deploying AI ad hoc.

Moreover: clients, regulators and partners increasingly demand demonstrable AI governance. Those who sort this out now won't need to catch up in a panic later. And those who choose an AI partner that delivers compliance as part of their service have one fewer problem to solve. See what to expect from a good AI partner.

People, Data, Technology: the right framework for compliance

The AI Act seems complex, but the core is simple. Every compliance question breaks down along three axes: People, Data and Technology. Always in that order.

- People: Human oversight (Article 14). Who in your organisation is responsible? Do your planners, team leads and quality managers have sufficient AI knowledge? Are stakeholders involved in decisions that AI makes?

- Data: Data governance (Article 10). What goes into your AI systems? Is the data high-quality, representative and free from bias? Is the data you collect for reporting also fit to drive AI in your operations?

- Technology: Technical robustness (Article 15). Are your systems tested? Is there an override? Is it running in production or still as a pilot?

This isn't a theoretical model. The AI Act legally mandates what good AI implementation has always required. Those who deploy AI with people as the starting point, who take data quality seriously, and who only deploy technology once the foundation is in place, already have a strong basis for compliance.

Start your AI Act readiness check along these three axes. Take our free AI Act Readiness Scan and discover in 3 minutes where your organisation stands. You'll find that most of the work isn't in technology, but in people and processes.

Frequently asked questions

Does the EU AI Act apply to small organisations?

Yes. The AI Act applies to everyone who builds, sells or uses AI systems within the EU, regardless of company size. Only for providers of AI systems with turnover below EUR 10 million is there a limited exemption for conformity assessment.

What is the deadline for high-risk AI systems?

2 August 2026. From that date, high-risk AI systems must be fully compliant, including documentation, CE marking and post-market monitoring. Compliance projects for high-risk systems typically take 6 to 9 months. Time is tight.

What is the difference between an AI provider and a deployer?

A provider builds and distributes the AI system and carries the heaviest obligations. A deployer uses the system under its own responsibility. If you purchase an AI solution and deploy it in your operations, you are the deployer.

What does non-compliance cost?

Prohibited AI practices: up to EUR 35 million or 7% of global annual turnover. Non-compliance with high-risk obligations: up to EUR 15 million or 3%. That is structurally higher than GDPR fines.

Next step

Already a BrainStax client? Then you don't need to worry about provider compliance. We ensure that the AI systems we build for you comply with the AI Act. That's part of our partnership. We'll tackle your deployer obligations together.

Not yet a client? Then you need to take action now. August 2026 is coming fast. Start with our free AI Act Readiness Scan: 6 questions, 3 minutes, and you'll know exactly where you stand. Classify your systems and check whether your current AI vendor can guarantee compliance. Here's what to look for.

Prefer to read more first? Download our paper on hypereffective AI implementation and discover how People, Data and Technology form the foundation for AI that works and is compliant.